Some commonalities between interactive versus non-interactive proof systems

In today's post, I'd love to share some brief learnings regarding non-interactive and interactive (probabilistic) proof systems.

But first, we have to resolve some confusion.

What do you mean by interactive?

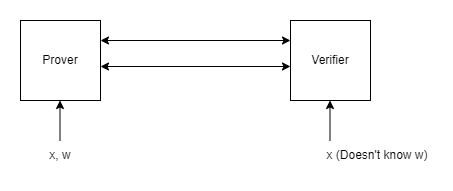

Proof systems allow a prover to convince a verifier of some statement. Such as "I know the private key behind this Bitcoin address" or even "I know what you did last summer" .Interactive Oracle Proofs are one way to formally model proofs, and as the name suggests, they allow for interaction between the prover and verifier. I.e., they allow for messages to be sent between the prover and verifier, in order to enhance soundness of the proof. However, this interaction only exists on a theoretical level. In practice, provers can leverage the Fiat-Shamir Transform or Fischlin's Transform, in order to "simulate" a verifier's random challenges, allowing the prover to send only a single message to the verifier. Most proof systems of this type are called SNARKs (Succinct Non-Interactive ARguments of Knowledge).

However, there are also proving systems which actually do require interaction, in practice. A clean model which has seen a lot of progress recently is Vector Oblivious Linear Extensions. These allow for very fast low memory footprint proving, but actually, in practice, require interaction between a prover and verifier. Having low latency and high bandwidth therefore becomes crucial to the effective performance of such proofs. Due to the requirement of actual interaction, they are also Designated Verifier: only a single verifier at a time has the information to assess that a proof is honest.

Both SNARKs and VOLE-based proofs allow for zero-knowledge evaluation: an arbitrary part of the statement to be proven can be hidden from the verifier.

However cool the properties of SNARKs, I'd argue that the majority of statements which need to be proven in the world are between two parties. Whether you like it or not, our society is made up of individual people and individual organisations. So as more and more proofs are made in the real world, VOLE may also play a key role.

Which software methods exist to optimize SNARKs and VOLE-based proofs?

Optimizing the statement to prove within the proof system

Both SNARKs and VOLE-based proof system families operate over a circuit abstraction, a flattened representation of the computation consisting of primitive operations (add and mul) or polynomials. In either family, it is worthwhile to reduce the size of the circuit and reduce the size of the actual statement to prove.

Fortunately, computations can be represented in many ways. The compiler toolchains which build our software are great examples of how computations can be manipulated and optimized, without losing their meaning.

Proof system compilers can go a step further than traditional compilers. We can optimize statements so constraints do not represent the trace of a literal computation (analogous to imperative programming), but rather check that a computation has been performed correctly (analogous to declarative programming). Let's look at a simple example: what if we want to prove the computation a / b = c. In most proof systems, encoding a division is computationally expensive, but encoding a multiplication is cheap. Therefore, we can transform the computation to a = c * b and voilá, achieved a massive speedup.

One particularly useful technique is to put portions of the statement into a virtual lookup table. The ultimate hack, which has been used in hardware and software since the dawn of programming. For one instantiation of SNARK lookups, you can check out this great intro to LogUp. In the VOLE literature, most lookups show up in the form of accessing RAMs or ROMs, which is useful for the "Virtual Machine proving" discussed further below.

Batch proving independent statements

Most proof systems know some form of batch proving for statements which have no dependencies on each other. There is often some fixed cost which needs to be paid for proving, so if we can amortize this across 10 or 100 proofs, this reduces the resource cost per proof.

Accumulation and recursion

Accumulation and recursion are two methods to achieve Incrementally Verifiable Computation (IVC) and its generalization Proof Carrying Data (PCD).

Recursion refers to ensuring your statement includes the verification of a previous proof. A classic use of recursion is to use one proof system for a fast prover and wrap it in Groth16 to make it succinct. This works very well in the world of SNARKs, but because VOLE-based proof systems require actual interaction, they can only function as "the last stop" in a chain of proofs.

Accumulation refers to, surprise, accumulating several statements and proving them all at once. It is similar to batch proving, with the exception that statements can depend on each other. Existing techniques to achieve accumulation either consist of homomorphic vector commitments or performing spot-checks over error-correcting encodings of the committed vectors. This makes sense in the SNARK world, where you might want to accumulate lots of cheap operations before "finishing it off" with an expensive succinct proof. In the VOLE world, such accumulation is not typically waranted, because it already uniformly uses cheap operations. In theory, one might be able to encode the final accumulation check inside of a VOLE-based proof instead of a SNARK proof, if your goal it to achieve a faster prover.

One can also verify other proof systems using the commit-and-prove paradigm, including for example sigma protocols for delegating fast elliptic curve arithmetic.

Virtual Machine proving

Now we're getting more and more into the weeds. Instead of our statement encoding a computation, we can let the statement encode a virtual machine like RISC-V, consisting of just some primitive operations such as addition and multiplication. Proving our computation is now a matter of proving a series of steps of a VM. While the main benefit of this is simplicity and not proving time, given that it makes the statement to prove uniform, it opens the avenue towards easier hardware standardization, batching and accumulation.